Copyright © 2020 Mark Pilkington

Synergies (2020)

Duration: 6'15

Format: Code

Synergies (2020) is a collaborative piece by Dr. Mark Pilkington (Thought Universe) and Dr. Oliver Carman (University of Liverpool) that investigates the integration of sound and images in the composition of electroacoustic music. It uses code to form an animated graphic score that represents sounds derived from the human voice.

Description of Sound Materials

Initially, sound resources were recorded and created in response to a number of sketches drawn by Mark. The organic shapes and the human presence within the drawing naturally drew me towards the human voice. Having worked with vocal sounds before, I knew that a variety of breath sounds, throaty sounds and tongue clicks would respond well to sound processing. Aware that the AV element would also include visuals coded in Processing, I opted for a second source: material processed by a simple generative patch in VCV Rack (the sounds used ended up being 1-2 seconds of an FM synthesis patch).

Initial Steps

To begin with, the sound resources were processed to create a sound library for the new work, always keeping the sketches in mind. The transformations tend to fall into a number of categories, each moving further away from the initial source material (much in line with Smalley's gestural surrogacy concept):

1. Transformation of pitch, reversing, equalisation and compression (basic sound processing within a DAW); compositing materials into sound objects for further processing.

2. Cross synthesis (Cecilia): vocal sounds and FM sounds combined to create a crossover between organic and digital.

3. Extensive granular processing and filtering.

4. Starting at step 1 again, but with the newly processed materials.

Development

Selection and synchronisation of existing sound materials to a short 45-second video that had been created in Processing and provided by Mark.

At this stage it was already clear to me that a number of materials from the sound library stood out as suitable for the video.

Sound processing at this point tended to consist of modifications that made the materials represent the image more effectively, i.e. extending the resonant properties of a sound through granulation, or changing the envelope or panning to reflect the placement or movement of an image.

A further minute of material was then composed by developing these opening materials. This was sent back to Mark for the video to be created. The process continued back and forth throughout the creative process.

1. Synergies (2020) - audio

Text by Dr. Oliver Carman - May 2020

Visual Thoughts and Processes of Synergies

Sketching as Meta-model

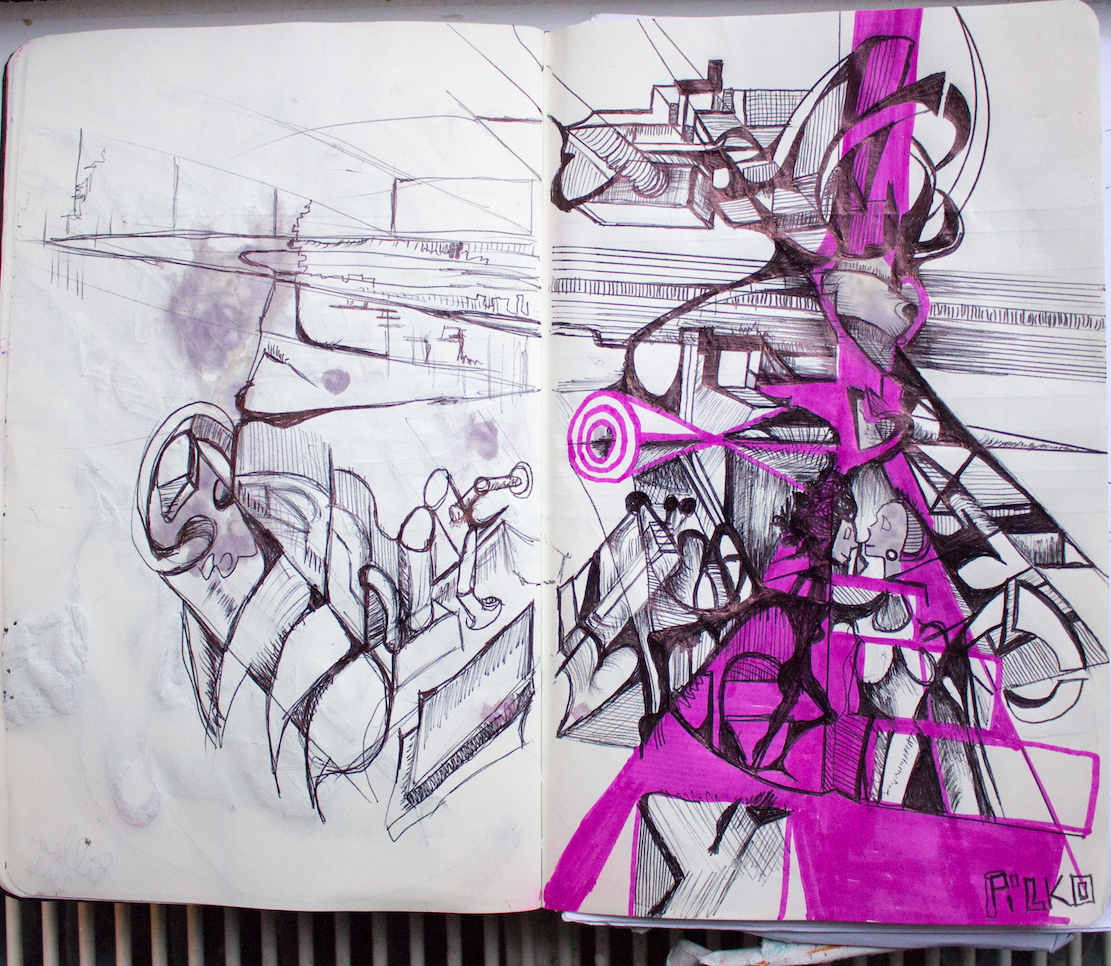

The visual process started as a hand-drawn sketch (fig. 1), conceived without a specific concept in mind and purely expressing my own thoughts and feelings. To begin with, the pen moved freely across the paper, revealing trajectories into an imaginary world. As the marks coalesced, consciousness folded in on itself to highlight a structured moment in time. Sketching in all its forms can be considered a form of diagrammatic thought, a kind of 'metamodeling' that presents an alternate way of thinking and writing. Metamodeling, as proposed by the French philosopher Félix Guattari, is a process for discovering new paths between concepts and diagrammatic forms (Watson, 2009, p. 7). The way ideas can be embedded in a sketch shares many similarities with computer data: both offer the user infinite possibilities of calculation across real and virtual space. At the same time, the originality and purity of a hand-drawn sketch stands in stark contrast to the vast number of digital images. There is a concern that, if left unchecked, computer imaging will form an image of the world forged through a 'biasing' of gender and race. The sketch questions the hierarchical arrangement of structural information by combining bottom-up and top-down approaches.

Fig. 1. The original hand-drawn sketch for Synergies.

Animating with Code

The original sketch (fig. 1) was animated using the visual language Processing to form a temporal framework to which Oliver could align his vocal treatments. Processing is a flexible software sketchbook and a language for learning code within the context of the visual arts. Whilst listening to the audio, I programmed a series of dynamic visual processes that followed the sound's morphological structure. By listening carefully to the sound material, I became acquainted with movement as a perceptual correspondence. According to Michel Chion, there are three modes of listening: causal, semantic and reduced (Chion, 1994, p. 25). The modes refer to different constituents of meaning-creation in the process of listening.

First, the duration of the sound file was mapped to the resolution of the screen. The sound file was embedded into a sketch using the Minim sound library. Visual cues were meticulously plotted to follow the duration of the sound file. The temporal framework provided a method to measure motion and align the spatial position of each visual entity. The process of coding continued until a state of equilibrium was reached between the visual and audio elements. The piece exists as a self-contained application (fig. 2).
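The mapping of duration to screen resolution described above can be illustrated with a minimal sketch. It is written in plain Java (the language Processing builds on) so that it runs on its own, and it is not the code used in the piece. The class name `TimelineMapping`, the helper `positionToX`, the 1920-pixel width and the cue times are all assumptions made for illustration; only the 6'15 duration comes from the text. Inside a Processing sketch, the same idea would typically use the built-in `map()` function with the playback position and length (in milliseconds) reported by Minim's `AudioPlayer`.

```java
public class TimelineMapping {

    // Linear remap of a value from one range to another,
    // similar in spirit to Processing's built-in map() function.
    public static float remap(float value, float inMin, float inMax,
                              float outMin, float outMax) {
        return outMin + (value - inMin) * (outMax - outMin) / (inMax - inMin);
    }

    // Horizontal pixel position for a playback position given in milliseconds.
    public static float positionToX(int positionMs, int durationMs, int screenWidth) {
        return remap(positionMs, 0, durationMs, 0, screenWidth);
    }

    public static void main(String[] args) {
        int durationMs = 375_000; // 6'15, the stated duration of the piece
        int screenWidth = 1920;   // assumed screen width in pixels

        // Hypothetical cue times (ms) at which visual events might be plotted.
        int[] cueTimesMs = {0, 45_000, 187_500, 375_000};

        for (int cue : cueTimesMs) {
            float x = positionToX(cue, durationMs, screenWidth);
            System.out.println("cue at " + cue + " ms -> x = " + x + " px");
        }
    }
}
```

With a mapping of this kind, each visual cue can be plotted against the timeline once and then positioned consistently at any screen resolution, since only `screenWidth` changes.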

Fig. 2. A screen grab of Synergies (2020).

Live Performance

Synergies (2020) is performed as a standalone application (macOS, Windows and Linux) linked to additional sound devices and projected across multiple screens. During a live performance the algorithmic system is in a continuous state of flux, taking on many different forms depending on the spatial information received from the user.

Fig. 3. Online performance video of Synergies for Art in Flux 2020.

Conclusion

In essence, within the realm of music composition, sound challenges the virtues of vision by altering the listener's perception of what constitutes a musical experience. When music is combined with algorithmic processes, it forms an ecological structure codependent on its environment. Music is a medium of communication with the ability to extend our relationship with ourselves and others.

In terms of the collaboration, the most important aspect to arise was being able to share and interact with Oliver. It helped establish a mutual link and an open dialogue through which to develop and envision the work. Working in collaboration is a process of communication, analysis and implementation of artistic ideas. This highlights collaborative learning as a process of adapting to change with encouragement and support.

As a standalone app, the piece transcends boundaries to reach audiences beyond the constraints of physical space, providing the broadest form of communication with unlimited access to interactive playback on fixed and mobile technologies.

Fig. 4. A p5.js sketch.

Bibliography

Chion, M., Audio-Vision: Sound on Screen, Columbia University Press, New York, 1994.

Watson, J., Guattari's Diagrammatic Thought: Writing Between Lacan and Deleuze, Continuum, London and New York, 2009.